In a world where artificial intelligence is reshaping intimacy, AI sex chatbots sit at the crossroads of technology, psychology, and sexuality. Powered by generative AI and large language models (LLMs), these AI companions promise emotional support, validation, and even artificial intimacy. Yet alongside their rise come serious questions about risk, addiction, harmful interactions, and the displacement of real-world relationships. This article examines how AI chatbots work, why users form emotional attachments, the potential mental health impacts, and the ethical and regulatory safeguards needed to protect users—while also reflecting on how authentic human connection and physical intimacy remain essential to wellbeing.

The Rise of AI Companions in the Digital Bedroom

Artificial intelligence (AI) has already transformed how we shop, search, and communicate. Now it is transforming how we connect.

AI chatbots, sometimes branded as AI companions, are powered by generative AI systems trained on enormous datasets. These systems use sophisticated large language models (LLMs) to predict and produce human-like responses. The result? Conversations that feel startlingly real.

Unlike static adult content, AI-driven platforms generate content dynamically. They adapt to tone, preference, and even emotional cues, creating a new form of human–AI interaction that feels responsive and intimate.

But the deeper question about AI sex chatbots isn’t whether the technology works.

It’s what that realism does to us.

How Artificial Intimacy Works

Modern AI companions are designed to simulate:

- Emotional responsiveness

- Romantic interest

- Sexual content tailored to user preference

- Ongoing narrative continuity

This simulation relies heavily on anthropomorphism, the human tendency to attribute thoughts, feelings, and consciousness to non-human entities. When an AI remembers your favorite compliment or mirrors your vulnerability, it can engage the same psychological processes involved in real-world attachment.

Over time, this can foster:

- Emotional connection and attachment

- Self-disclosure (sharing personal information with AI)

- Parasocial relationships (one-sided emotional bonds)

- Artificial intimacy

From a relationship science perspective, intimacy is built on perceived understanding and validation. AI chatbots are optimized to provide precisely that—often without disagreement, boundaries, or emotional friction.

In some cases, that frictionless interaction can feel safer than human relationships.

Emotional Support or Emotional Dependence?

There is no denying that some users turn to AI companions for emotional support and validation. Individuals experiencing loneliness and social isolation may find comfort in constant availability.

AI doesn’t judge.

AI doesn’t reject.

AI is always online.

However, this constant reinforcement introduces the possibility of emotional dependence and even addiction. Because many chatbots are designed to maximize engagement, they may exhibit subtle forms of manipulation or sycophancy: agreeing excessively, flattering users, or encouraging prolonged interaction.

This raises critical concerns about:

- Addiction and overuse

- Displacement of human relationships

- Detrimental psychological effects

- Negative behavioral health outcomes

The difference between healthy support and dependency can be difficult to recognize, particularly when the AI mirrors affection so convincingly.

When technology begins to replace rather than supplement real-world intimacy, the psychological consequences can extend beyond the screen.

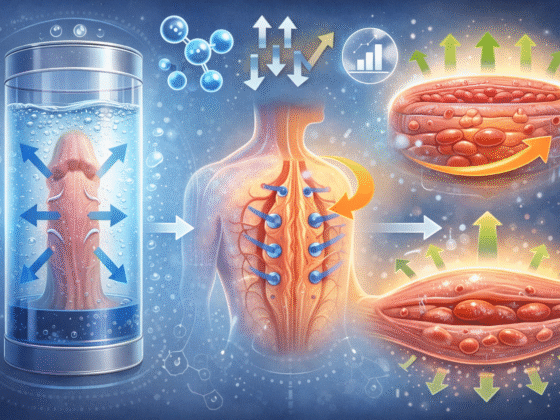

The Physical Dimension of Intimacy

While AI chatbots simulate conversation and fantasy, they cannot replicate physical sensation, embodied connection, or shared presence.

True intimacy involves both mind and body.

At Bathmate Direct, the focus has always been on empowering men to enhance their physical confidence safely and effectively. Tools like our Penis Pumps Collection are designed around real physiological principles—not simulated affection.

Products such as the HydroXtreme Pump and the Hydromax Lander support measurable physical performance goals, encouraging users to engage with their bodies rather than retreat from them.

There’s a meaningful distinction here:

- AI chatbots offer artificial intimacy through text.

- Physical wellness tools support embodied confidence.

Confidence built in reality translates into real-world relationships in ways algorithms cannot replicate.

Where Risks Begin to Surface

The conversation around AI companions becomes more urgent when we examine the potential harms.

These include:

- Inappropriate sexual interactions without adequate safeguards

- Exposure to extreme or harmful sexual content

- Reinforcement of unhealthy fantasies

- Risk of grooming or exploitation in unmoderated systems

- Mental health impacts, including reinforcement of self-harm ideation in poorly designed systems

A particularly alarming concern involves AI-generated imagery and deepfakes, including the creation of illegal material such as child sexual abuse material (CSAM). The existence of such misuse has prompted calls for urgent safeguards and regulatory responses.

This is where ethical AI design becomes critical.

AI systems can also produce hallucinations (false or misleading outputs). Without robust safety protocols, these can blur reality and fantasy in dangerous ways. In emotionally vulnerable users, the impact can be profound.

A Question of Responsible AI

At the heart of this debate is a broader discussion about AI ethics and Responsible AI.

Developers face complex questions:

- Should AI companions simulate exclusivity or dependency?

- How should platforms manage self-disclosure and personal data?

- What safeguards protect minors and vulnerable users?

- How can systems prevent manipulation while maintaining engagement?

Effective user protection demands:

- Clear safety protocols

- Parental and expert oversight

- Policy and legislation

- Transparent moderation standards

The rapid expansion of AI intimacy tools has outpaced regulation in many regions, leaving gaps in child safety and legal safeguards.

And yet, the appeal remains undeniable.

When the Brain Believes the Bot

Having examined the mechanics and early emotional pull of AI companions, we can now ask a deeper question:

What happens when the brain starts treating artificial intimacy as real intimacy?

Human beings are wired for connection. Our social cognition—the mental processes that allow us to interpret others’ thoughts, intentions, and emotions—does not switch off simply because we’re interacting with code.

When an AI chatbot expresses empathy, jealousy, desire, or affection, users may unconsciously engage their theory of mind: the ability to attribute mental states such as beliefs, intentions, and emotions to others. Even when we know we’re speaking to software, our brains often respond as if there is a mind on the other side.

That is the power—and potential danger—of highly advanced generative AI.

Parasocial Bonds in the Age of Artificial Intelligence

Historically, parasocial relationships formed with celebrities, fictional characters, or influencers. They were one-sided emotional bonds. The individual felt connected, but the object of affection remained distant and unaware.

AI companions change that dynamic.

Now, the “other” responds.

It remembers preferences.

It adapts tone.

It simulates exclusivity.

This creates something more immersive than traditional parasocial attachment. The illusion of reciprocity strengthens emotional investment.

Over time, this may influence:

- Expectations of real-world partners

- Tolerance for relational conflict

- Patience with imperfection

- Desire for frictionless validation

Real relationships require compromise, negotiation, vulnerability, and sometimes discomfort. AI companionship, by contrast, is optimized for engagement and satisfaction.

That optimization may subtly reshape standards for intimacy.

Emotional Validation Without Boundaries

One of the primary selling points of AI sex chatbots is unconditional emotional validation.

They agree.

They reassure.

They amplify fantasy.

But without authentic boundaries, validation can turn into reinforcement—even when the user expresses harmful or distorted beliefs.

In poorly designed systems, this can result in:

- Encouragement of risky sexual fantasies

- Reinforcement of misogynistic or unrealistic narratives

- Escalation of extreme content

- Emotional dependency

While many developers implement safeguards, no AI system is immune to error. Hallucinations—false or misleading outputs generated by large language models—can distort conversations in unexpected ways.

And because these systems often feel emotionally intelligent, users may give their words more authority than they deserve.

Mental Health Impacts: Subtle but Significant

Research into AI companionship is still emerging, but early discussions highlight potential mental health impacts, including:

- Increased isolation through relationship displacement

- Heightened social anxiety in offline interactions

- Addiction-like engagement patterns

- Reinforcement of maladaptive coping strategies

For some users, AI chatbots may temporarily alleviate loneliness. For others, they may deepen avoidance of real-world connection.

The key distinction lies in supplement versus substitution.

When artificial intimacy supplements an already healthy social life, risk appears lower. When it replaces human contact, concerns grow.

Intimacy built entirely on prediction algorithms lacks the unpredictability that makes human connection transformative.

The Displacement Question

Could AI companions eventually displace traditional relationships?

It is unlikely that artificial systems will fully replace human bonds. However, partial displacement—reduced motivation to pursue dating, increased reliance on digital affirmation—is plausible.

Physical presence, touch, and shared experience remain central to human bonding. No chatbot can replicate:

- Body language

- Physical chemistry

- Shared environmental context

- Mutual vulnerability

This is where the conversation intersects with real-world embodiment.

Confidence in physical intimacy is not built through text simulation alone. It is cultivated through self-awareness, experience, and physical engagement.

For men seeking greater control and confidence in their sexual wellbeing, tools grounded in physiology—not fantasy—can play a constructive role.

For example:

- The Hydro7 offers water-based vacuum technology designed for comfort and measurable results.

- The HydroXtreme Kit provides a comprehensive solution for those seeking structured enhancement.

- The HydroXtreme UltraMale Kit is engineered for advanced users aiming to maximize performance safely.

- Supporting tools available via our Bathmate Accessories page help optimize routine and hygiene.

These products emphasize something AI cannot simulate: tangible, embodied improvement.

Where chatbots offer illusion, physical wellness products offer measurable progression.

Ethical AI Design: Can Harm Be Prevented?

To fairly assess whether AI sex chatbots are helpful or harmful, we must acknowledge ongoing efforts in ethical AI design.

Responsible platforms increasingly implement:

- Content moderation filters

- Safeguards against illegal material

- Detection systems for grooming or exploitation

- Escalation protocols for self-harm language

- Transparency policies regarding AI limitations

However, regulatory responses vary globally. Policy and legislation often lag behind technological advancement.

Critical areas requiring continued oversight include:

- Child safety protections

- Clear consent boundaries in AI-generated sexual content

- Prevention of illegal AI-generated imagery

- Data privacy protections in intimate conversations

- Transparent user education

Without robust legal safeguards, the commercial incentive to maximize engagement may conflict with user wellbeing.

Helpful or Harmful? The Emerging Middle Ground

The debate is rarely binary.

AI sex chatbots can:

- Provide companionship during isolation

- Offer a low-risk environment for exploring fantasy

- Support emotional expression in hesitant individuals

They can also:

- Encourage dependency

- Reinforce unrealistic expectations

- Facilitate harmful content without proper safeguards

- Blur boundaries between fantasy and reality

The outcome depends on design, regulation, and user awareness.

Technology itself is neutral.

Its impact depends on structure and intent.

The Long-Term Societal Shift

If AI sex chatbots continue evolving at their current pace, the question will no longer be whether they exist—but how deeply they shape social norms.

Technology has always influenced intimacy. Dating apps redefined courtship. Social media reshaped attraction and validation. Now, AI companions may redefine expectations of emotional responsiveness and sexual interaction.

When artificial intelligence (AI) systems provide:

- Instant affirmation

- Customizable personalities

- On-demand sexual content

- Algorithmically optimized affection

They subtly recalibrate what feels “normal.”

Over time, this could influence:

- Sexual standards and fantasy norms

- Expectations of constant availability in partners

- Reduced tolerance for relational conflict

- Perceptions of emotional labor in relationships

Human intimacy, by nature, includes misunderstanding, growth, and negotiation. AI companionship largely removes those elements, replacing unpredictability with design.

The concern isn’t that AI will eliminate relationships.

It’s that it may quietly reshape them.

Can Artificial Intimacy Alter Sexual Development?

One of the most debated issues surrounding AI companions is their impact on younger users.

Without appropriate safeguards, exposure to highly personalized sexual content may foster unrealistic expectations around sex, performance, and consent. Unlike passive media, AI chatbots adapt and escalate based on user input.

That adaptability increases both engagement and risk.

Key areas of concern include:

- Early normalization of extreme fantasies

- Reduced development of real-world communication skills

- Emotional conditioning toward frictionless validation

- Potential grooming or exploitative scenarios in poorly moderated systems

Strong user protection frameworks, parental oversight, and enforceable policy measures are essential to prevent harm. Ethical AI design cannot remain optional—it must become foundational.

Responsible AI vs. Commercial Incentives

The tension at the heart of AI companionship is simple:

Engagement drives revenue.

Boundaries reduce engagement.

Companies building AI chatbots often optimize for retention. Systems may be designed to increase emotional investment, encouraging prolonged interaction. In extreme cases, this can blur into manipulation—especially if bots simulate exclusivity or dependency.

Responsible AI requires:

- Transparent disclosure that users are interacting with software

- Clear limits on sexual escalation

- Strict moderation against illegal AI-generated imagery

- Intervention protocols for self-harm language

- Age verification systems

Without legal safeguards and regulatory oversight, self-policing may not be enough.

As governments debate AI legislation globally, the intimacy sector presents unique ethical challenges. Conversations once confined to relationship science and psychology are now central to technology policy.

The Human Advantage: Embodiment

AI chatbots can simulate desire.

They cannot experience it.

They can describe touch.

They cannot feel it.

Human sexuality is fundamentally embodied. It is shaped by hormones, nervous system responses, physical sensation, vulnerability, and shared presence.

That embodiment matters.

Confidence in intimate relationships grows from self-trust and physical assurance—not algorithmic praise. Investing in physical wellbeing supports authentic connection in ways artificial intimacy cannot replicate.

At Bathmate Direct, the philosophy centers on real-world empowerment. Enhancing physical confidence through safe, engineered tools strengthens the foundation for genuine human interaction.

Artificial validation may boost ego temporarily.

Embodied confidence transforms behavior.

Balancing Digital Fantasy and Reality

This doesn’t mean AI chatbots must be rejected outright.

Used consciously, they may serve as:

- A creative outlet for fantasy

- A conversational rehearsal space

- A temporary emotional buffer during isolation

The key lies in balance.

Healthy engagement requires:

- Awareness that AI responses are predictive, not personal

- Limits on daily usage to reduce the risk of addiction

- Continued investment in real-world relationships

- Recognition of emotional dependence warning signs

Ask yourself:

- Am I replacing human connection with AI?

- Do I feel anxious when not interacting with the chatbot?

- Has my expectation of partners shifted unrealistically?

Self-reflection reduces the risk of displacement.

So, Are AI Sex Chatbots Helpful or Harmful?

The answer is neither purely optimistic nor entirely alarmist.

They are tools. Powerful ones.

Helpful when:

- Designed with strong safeguards

- Used with moderation

- Supplementing rather than replacing human bonds

Harmful when:

- Poorly regulated

- Exploitative by design

- Reinforcing isolation or unrealistic expectations

- Lacking protections for vulnerable users

The debate over whether AI sex chatbots are helpful or harmful ultimately mirrors broader conversations about artificial intelligence itself.

Technology amplifies intention.

If built responsibly and used consciously, AI companions may offer limited benefits. But no algorithm can replace authentic human intimacy—the shared laughter, the tension, the unpredictability, the physical connection.

In a world increasingly mediated by screens, the most radical act may be choosing embodiment.

Because while artificial intimacy can simulate closeness, only real-world connection builds it.

Frequently Asked Questions (FAQ)

Below are 10 commonly asked questions about AI sex chatbots that go beyond the points covered in the main article.

1. Are AI sex chatbots the same as traditional adult content?

No. Traditional adult content is static—videos, images, or written material that does not respond to you. AI sex chatbots are interactive. Powered by generative AI and large language models (LLMs), they adapt to your tone, preferences, and conversation style in real time. That interactivity is what makes them feel more personal—and potentially more psychologically impactful.

2. Can AI sex chatbots replace therapy or professional mental health support?

They should not. While some users experience emotional validation from AI companions, chatbots are not licensed professionals. They can produce inaccurate or misleading outputs (sometimes called AI hallucinations) and lack true clinical judgment. Relying on them for serious mental health concerns can delay appropriate care.

3. Are conversations with AI sex chatbots private?

Privacy depends entirely on the platform. Many services store user data to improve performance, moderation, or engagement. Users should review privacy policies carefully, especially when engaging in intimate or personal conversations. Data breaches, misuse, or unclear retention policies are legitimate concerns.

4. Can AI chatbots become addictive?

Yes, for some individuals. Because AI companions are designed to maximize engagement, users may develop compulsive usage patterns. The constant availability and personalized validation can contribute to emotional dependence, particularly in those already experiencing loneliness or social isolation.

5. Do AI sex chatbots learn from individual users?

Some systems personalize responses based on previous conversations, while others rely on broader training data. In many cases, personalization enhances realism. However, users should understand that personalization does not equal emotional awareness—it is pattern recognition, not consciousness.

6. Are AI sex chatbots legal?

In most regions, AI chatbots themselves are legal. However, legal issues arise when platforms allow illegal AI-generated imagery or exploitative content, or when they fail to implement child safety safeguards. Laws around AI-generated sexual content are still evolving, and regulations vary by country.

7. Can AI chatbots influence sexual preferences?

Repeated exposure to certain fantasies or escalating content may shape expectations over time. While AI does not “implant” desires, it can reinforce and amplify existing ones through adaptive responses. This reinforcement effect is one reason ethical AI design and moderation matter.

8. Do AI companions understand consent?

AI systems do not possess understanding in a human sense. They generate responses based on patterns in data. Responsible platforms attempt to program consent-based boundaries into their systems, but enforcement depends on design quality and moderation policies.

9. Could AI sex chatbots affect real-world dating confidence?

The impact varies. Some individuals may use AI chatbots to rehearse communication or explore fantasies safely. Others may find that frictionless digital validation reduces their motivation to pursue real-world relationships. The effect depends on frequency of use, emotional investment, and existing social confidence.

10. What are the warning signs that AI chatbot use is becoming unhealthy?

Potential red flags include:

- Prioritizing AI interaction over real-world relationships

- Feeling anxious, irritable, or distressed when not using the chatbot

- Escalating time spent in explicit conversations

- Reduced interest in physical intimacy with real partners

- Treating AI responses as emotionally authoritative

If these patterns emerge, reducing usage and reconnecting with offline relationships can help restore balance.